AI agents need memory. Not just a conversation buffer that disappears after each session — real, persistent memory that learns from every interaction, connects facts across documents, and retrieves exactly the right context when the agent needs it.

That’s what Cognee does. It’s an open-source AI memory engine with 14,600+ GitHub stars, $7.5M in seed funding, and 70+ companies using it in production. Cognee ingests data in any format, builds a knowledge graph using cognitive science approaches, and gives AI agents the ability to search across both vector embeddings and graph relationships.

ArcadeDB is now available as a graph database backend for Cognee — and its multi-model architecture makes it uniquely suited for the job.

What Cognee Does

Cognee’s API is intentionally minimal. Three functions cover the entire pipeline:

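import cognee

# Run these inside an async function or a notebook cell;
# top-level await is not valid in a plain Python script.
await cognee.add("your data here")   # Ingest documents, text, or URLs
await cognee.cognify()               # Build the knowledge graph
await cognee.search("your query")    # Search across graph + vectors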

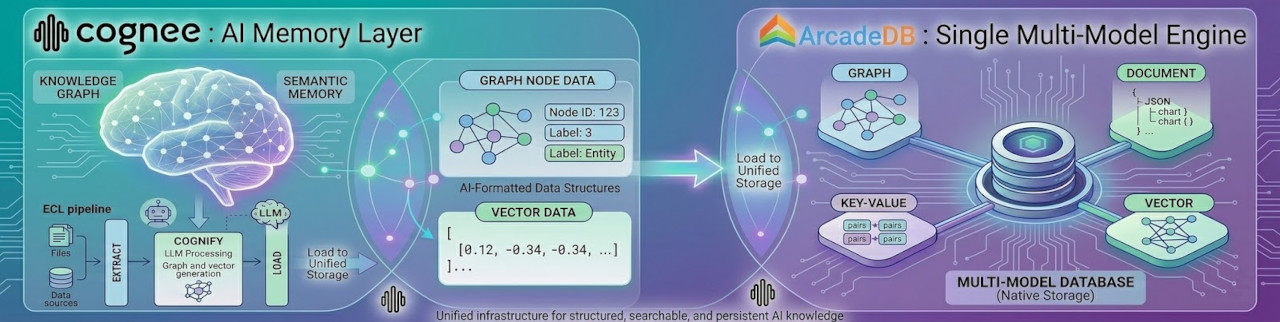

Under the hood, Cognee extracts entities and relationships from your data, builds a knowledge graph, generates vector embeddings, and stores everything for retrieval. When an agent searches, Cognee combines graph traversal with vector similarity to return contextually rich results — not just the closest embedding match, but the connected facts around it.
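To make the idea of combined retrieval concrete, here is a minimal, self-contained sketch. It is not Cognee’s implementation: the embeddings, the edge list, and the `hybrid_search` helper are all hypothetical toy stand-ins, and the vectors are three-dimensional only to keep the example readable. It ranks nodes by cosine similarity and then returns each hit together with its outgoing relationships.

```python
import math

# Hypothetical toy data: tiny embeddings and a tiny knowledge graph.
EMBEDDINGS = {
    "ArcadeDB": [0.9, 0.1, 0.0],
    "Apache 2.0": [0.1, 0.9, 0.0],
    "graph model": [0.7, 0.0, 0.3],
}
EDGES = {
    "ArcadeDB": [("licensed_under", "Apache 2.0"), ("supports", "graph model")],
    "Apache 2.0": [],
    "graph model": [],
}


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def hybrid_search(query_vec, top_k=1):
    """Rank nodes by vector similarity, then attach each hit's outgoing edges."""
    ranked = sorted(
        EMBEDDINGS,
        key=lambda name: cosine(query_vec, EMBEDDINGS[name]),
        reverse=True,
    )
    return [(name, EDGES[name]) for name in ranked[:top_k]]


hits = hybrid_search([1.0, 0.0, 0.0])
for name, relations in hits:
    print(name, relations)
```

The point of the second step is that the caller receives the matched entity plus the facts connected to it, rather than an isolated nearest neighbor.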

This architecture requires two database backends: a graph database for entities and relationships, and a vector store for embeddings. Most Cognee deployments use separate databases for each — for example, Neo4j for graphs and Qdrant for vectors.

ArcadeDB handles both in a single engine.

Why ArcadeDB

ArcadeDB is a multi-model database that natively supports graphs, documents, key/value, time series, full-text search, and vector embeddings. For Cognee, this means:

One database instead of two (or three). ArcadeDB stores your knowledge graph and your vector embeddings in the same engine. No need to synchronize data between a graph database and a separate vector store. No additional infrastructure to deploy, monitor, and maintain.

Native graph performance. ArcadeDB isn’t a graph layer on top of a relational engine. It uses a native graph storage model with direct record links — no index lookups for traversals. On the LDBC Graphalytics benchmark, ArcadeDB is up to 9x faster than KuzuDB (Cognee’s previous default) on algorithms like PageRank and BFS.

ArcadeDB is faster on every LDBC Graphalytics algorithm and up to 25x faster on LSQB subgraph pattern matching queries. Full benchmark results are available on GitHub.
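The "direct record links" idea mentioned above can be illustrated with a small sketch. This is only an analogy in plain Python, not ArcadeDB’s storage format: the `Vertex` class and `bfs` function are hypothetical names chosen for the example. Each vertex holds references to its neighbors, so a traversal follows pointers instead of looking edges up in a separate index.

```python
from collections import deque


class Vertex:
    """A vertex that keeps direct references to its neighbors."""

    def __init__(self, name):
        self.name = name
        self.out = []  # outgoing links, stored as object references

    def link(self, other):
        self.out.append(other)


def bfs(start):
    """Breadth-first traversal that only follows stored references."""
    seen = {start.name}
    order = []
    queue = deque([start])
    while queue:
        vertex = queue.popleft()
        order.append(vertex.name)
        for neighbor in vertex.out:
            if neighbor.name not in seen:
                seen.add(neighbor.name)
                queue.append(neighbor)
    return order


a, b, c, d = (Vertex(n) for n in "abcd")
a.link(b)
a.link(c)
b.link(d)
c.link(d)
visited = bfs(a)
print(visited)
```

Each hop costs one reference dereference, regardless of how many edges exist elsewhere in the graph, which is the property that matters for traversal-heavy algorithms such as BFS.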

OpenCypher compatibility. ArcadeDB passes 97.8% of the official Cypher Technology Compatibility Kit. The Cognee adapter uses standard Cypher queries over the Bolt protocol — the same protocol and query language used by Neo4j. No proprietary APIs.
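Because the transport is Bolt and the query language is Cypher, a generic Bolt client should in principle be able to talk to the same endpoint. The sketch below assumes the official `neo4j` Python driver works against ArcadeDB’s Bolt plugin, which this post does not confirm, and it assumes the URL, credentials, and `cognee` database name from the setup section. `count_vertices` and `open_driver` are hypothetical helper names.

```python
def count_vertices(driver, database="cognee"):
    """Run a standard Cypher count through a Bolt driver object."""
    with driver.session(database=database) as session:
        record = session.run("MATCH (n) RETURN count(n) AS total").single()
        return record["total"]


def open_driver(url="bolt://localhost:7687", user="root", password="arcadedb"):
    """Create a driver with the official neo4j package (pip install neo4j).

    Assumption: ArcadeDB's Bolt endpoint accepts this driver.
    """
    from neo4j import GraphDatabase  # imported lazily so the sketch loads without it

    return GraphDatabase.driver(url, auth=(user, password))
```

With a running server, `count_vertices(open_driver())` would return the number of vertices in the database; treat any incompatibility as a reason to fall back to the Cognee adapter rather than as a bug in this sketch.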

Apache 2.0, forever. ArcadeDB is fully open source under the Apache 2.0 license, with a public commitment to never change it. After KuzuDB’s acquisition by Apple and subsequent archival, licensing stability matters more than ever.

Setting Up ArcadeDB with Cognee

1. Start ArcadeDB with Bolt enabled

docker run -d --name arcadedb -p 2480:2480 -p 7687:7687 \
  -e JAVA_OPTS="-Darcadedb.server.rootPassword=arcadedb \
    -Darcadedb.server.defaultDatabases=cognee[root]{} \
    -Darcadedb.server.plugins=Bolt:com.arcadedb.bolt.BoltProtocolPlugin" \
  arcadedata/arcadedb:latest

This starts ArcadeDB with the Bolt protocol on port 7687 and automatically creates a cognee database.
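The container can take a few seconds to start listening. As a convenience, a small readiness check like the following can gate the next steps. It is a sketch using only the standard library; `wait_for_port` is a hypothetical helper, and a successful TCP connection only shows that the port is open, not that the Bolt handshake or the `cognee` database is ready.

```python
import socket
import time


def wait_for_port(host, port, timeout=30.0, interval=0.5):
    """Return True once a TCP connection to host:port succeeds, False on timeout."""
    deadline = time.monotonic() + timeout
    while True:
        try:
            with socket.create_connection((host, port), timeout=interval):
                return True
        except OSError:
            # Connection refused or timed out: retry until the deadline passes.
            if time.monotonic() >= deadline:
                return False
            time.sleep(interval)


# Example call (not executed here): wait_for_port("localhost", 7687)
```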

2. Install the Cognee ArcadeDB adapter

pip install cognee cognee-community-graph-adapter-arcadedb

3. Configure Cognee to use ArcadeDB

Set your environment variables:

GRAPH_DATABASE_PROVIDER="arcadedb"
GRAPH_DATABASE_URL="bolt://localhost:7687"
GRAPH_DATABASE_USERNAME="root"
GRAPH_DATABASE_PASSWORD="arcadedb"

Or configure programmatically:

import cognee

cognee.config.set_graph_database_provider("arcadedb")
cognee.config.set_graph_db_config({
    "graph_database_url": "bolt://localhost:7687",
    "graph_database_username": "root",
    "graph_database_password": "arcadedb",
})

That’s it. From this point, every cognee.add(), cognee.cognify(), and cognee.search() call uses ArcadeDB as the graph backend.
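If you take the environment-variable route from step 3, a quick pre-flight check can catch a missing setting before the first Cognee call. This is a standard-library sketch; `missing_graph_settings` is a hypothetical helper, and it only checks that the four variables listed above are present and non-empty, not that their values are valid.

```python
import os

REQUIRED_SETTINGS = (
    "GRAPH_DATABASE_PROVIDER",
    "GRAPH_DATABASE_URL",
    "GRAPH_DATABASE_USERNAME",
    "GRAPH_DATABASE_PASSWORD",
)


def missing_graph_settings(env=None):
    """Return the names of required settings that are unset or empty."""
    env = os.environ if env is None else env
    return [name for name in REQUIRED_SETTINGS if not env.get(name)]


example_env = {
    "GRAPH_DATABASE_PROVIDER": "arcadedb",
    "GRAPH_DATABASE_URL": "bolt://localhost:7687",
    "GRAPH_DATABASE_USERNAME": "root",
}
print(missing_graph_settings(example_env))
```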

4. Build and query a knowledge graph

import asyncio

import cognee


async def main():
    # Ingest some data
    await cognee.add(
        "ArcadeDB is a multi-model database that supports graph, "
        "document, key/value, time series, and vector data models. "
        "It is open source under the Apache 2.0 license."
    )

    # Build the knowledge graph
    await cognee.cognify()

    # Search with combined graph + vector retrieval
    results = await cognee.search("What data models does ArcadeDB support?")
    for result in results:
        print(result)


# Cognee's functions are coroutines, so run them inside an event loop
asyncio.run(main())

Cognee extracts entities (ArcadeDB, Apache 2.0, graph, document, etc.), builds relationships between them, generates embeddings, and stores everything in ArcadeDB. When you search, Cognee traverses the graph and runs vector similarity, returning results that reflect both semantic meaning and structural relationships.

The Multi-Model Advantage

Most AI memory systems treat graphs and vectors as separate concerns with separate databases. This creates real problems:

- Data synchronization. Entities in the graph must stay in sync with their vector representations. Two databases means two sources of truth.

- Operational complexity. Two databases to deploy, scale, back up, and monitor. Two sets of connection pools, credentials, and failure modes.

- Query-time overhead. A search that needs both graph context and vector similarity requires two round-trips to two different systems.

ArcadeDB eliminates this split. A single record in ArcadeDB can be a graph vertex with edges to other entities, carry a vector embedding for similarity search, and store document properties, all reachable from a single query. This is what multi-model means in practice: not just supporting multiple APIs, but storing and querying multiple data representations in a single, consistent engine.

For Cognee’s architecture specifically, this means the knowledge graph and the vector index live in the same database, on the same data. No synchronization layer. No eventual consistency between two systems. One transactional engine.
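To make the synchronization problem from the list above concrete, here is the kind of reconciliation check a two-database deployment tends to need. It is an illustrative sketch only: `find_drift` is a hypothetical helper, and the ID lists stand in for whatever a real graph database and vector store would return.

```python
def find_drift(graph_ids, vector_ids):
    """Return entity IDs that exist in only one of the two stores."""
    graph_ids, vector_ids = set(graph_ids), set(vector_ids)
    return {
        "missing_embedding": sorted(graph_ids - vector_ids),
        "orphan_embedding": sorted(vector_ids - graph_ids),
    }


# "e1" was written to the graph but never embedded;
# "e4" was deleted from the graph but its vector was left behind.
report = find_drift(["e1", "e2", "e3"], ["e2", "e3", "e4"])
print(report)
```

When the vertex and its embedding live in one record inside one transactional engine, neither kind of drift can arise from a half-completed write, so a job like this has nothing to reconcile.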

What’s Next

The ArcadeDB adapter for Cognee is available today as a community package. We’re working with the Cognee team to:

- Expand the integration to cover ArcadeDB’s vector search capabilities directly within the Cognee pipeline

- Optimize graph construction queries for ArcadeDB’s native traversal performance

- Make ArcadeDB a first-class backend option in Cognee’s documentation and getting started guides

If you’re building AI agents that need structured, persistent memory — or if you’re looking for a single database to replace a graph DB + vector store combination — give ArcadeDB + Cognee a try.

Get started: