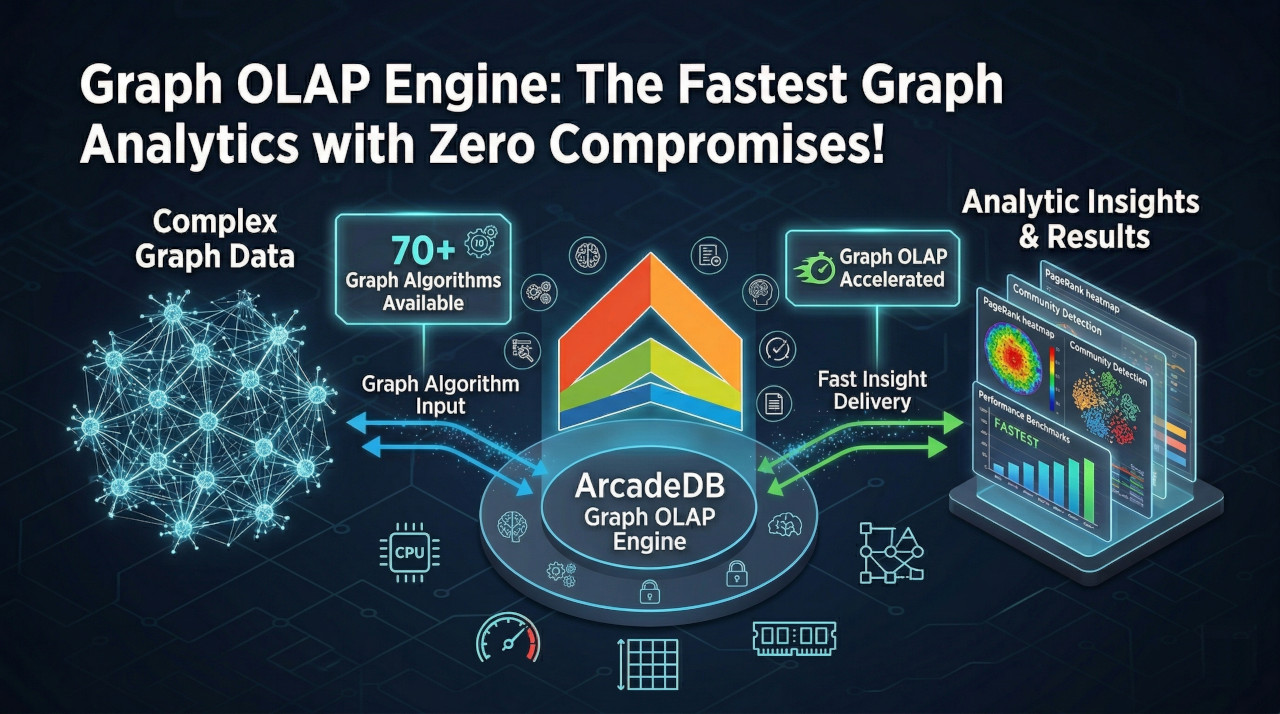

ArcadeDB has always been the fastest OLTP graph database. But we asked ourselves: what if we could make analytical queries — PageRank, connected components, multi-hop traversals — up to 462x faster, without giving up a single byte of transactional performance?

With ArcadeDB v26.3.2, we’re introducing the Graph OLAP Engine — and the answer is yes. Zero compromises.

The Problem with OLTP for Analytics

ArcadeDB’s OLTP engine is built for speed: point lookups, single-record mutations, ACID transactions — it handles all of this faster than any other graph database. But analytical workloads are a different beast. When you run PageRank or community detection, you’re accessing millions of edges in tight loops. The row-oriented, pointer-chasing nature of OLTP storage hits three walls:

- Cache misses: every edge lookup follows a RID pointer to a different page in memory

- Object overhead: every vertex and edge is a Java object with ~48 bytes of overhead

- GC pressure: traversals create millions of short-lived objects that hammer the garbage collector

These are fundamental limitations of any OLTP storage engine, not just ours. The traditional answer has been to export your data to a separate analytics system. We think that’s unacceptable.

Graph Analytical Views: OLAP That Lives Inside Your Database

The Graph OLAP Engine introduces Graph Analytical Views (GAV) — a read-optimized, columnar representation of your graph that lives alongside your live OLTP data. You create a view, and the engine keeps it synchronized with every write.

Here’s how simple it is:

CREATE GRAPH ANALYTICAL VIEW social
VERTEX TYPES (Person, Company)
EDGE TYPES (FOLLOWS, WORKS_AT)
PROPERTIES (name, age, status)
UPDATE MODE SYNCHRONOUS

Or if you want a view over your entire graph:

CREATE GRAPH ANALYTICAL VIEW fullGraph

That’s it. From this moment on, your Cypher and SQL queries are automatically accelerated by the OLAP engine. No query changes needed — the optimizer detects the GAV and transparently substitutes OLTP traversal operators with CSR-based ones.

How It Works Under the Hood

Compressed Sparse Row (CSR) Encoding

Instead of pointer-chasing through pages, the OLAP engine encodes your entire graph topology as flat int[] arrays using CSR encoding:

Forward CSR (outgoing edges):
offsets:   [0, 3, 5, 8, ...]     ← one entry per vertex + sentinel
neighbors: [1, 5, 7, 2, 6, ...]  ← dense neighbor IDs, contiguous per source
Neighbors of vertex v = neighbors[offsets[v] .. offsets[v+1])
Out-degree of vertex v = offsets[v+1] - offsets[v]  ← O(1)

Both forward (OUT) and backward (IN) CSR indexes are maintained for bidirectional traversal. This layout is cache-line friendly — sequential memory access means the CPU prefetcher does the work for you. Zero object allocation during traversal. SIMD-friendly vectorized operations.
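To make the layout concrete, here is a minimal Java sketch that builds a forward CSR from an edge list and walks a vertex's neighbors. It assumes vertex IDs are already dense (0..n-1); the class and method names (`CsrSketch`, `build`, `outDegree`, `sumOfNeighborIds`) are hypothetical and illustrate the technique rather than ArcadeDB's internal code. A backward (IN) index is the same structure built from the reversed edges.

```java
import java.util.Arrays;

/** Hypothetical forward-CSR sketch; illustrates the layout, not ArcadeDB's internal code. */
final class CsrSketch {
    final int[] offsets;   // length n + 1; offsets[n] is the sentinel (= edge count)
    final int[] neighbors; // length m; each source's targets stored contiguously

    private CsrSketch(int[] offsets, int[] neighbors) {
        this.offsets = offsets;
        this.neighbors = neighbors;
    }

    /** Builds a CSR from parallel source/target arrays over dense vertex IDs 0..n-1. */
    static CsrSketch build(int n, int[] src, int[] dst) {
        int[] offsets = new int[n + 1];
        for (int s : src) offsets[s + 1]++;            // count out-degree per source
        for (int v = 0; v < n; v++) {
            offsets[v + 1] += offsets[v];              // prefix sum -> start of each slice
        }
        int[] cursor = Arrays.copyOf(offsets, n);      // next free slot per source
        int[] neighbors = new int[src.length];
        for (int e = 0; e < src.length; e++) {
            neighbors[cursor[src[e]]++] = dst[e];
        }
        return new CsrSketch(offsets, neighbors);
    }

    /** O(1) out-degree: difference of two adjacent offsets. */
    int outDegree(int v) {
        return offsets[v + 1] - offsets[v];
    }

    /** Allocation-free traversal: a plain index loop over the slice [offsets[v], offsets[v+1]). */
    long sumOfNeighborIds(int v) {
        long sum = 0;
        for (int i = offsets[v]; i < offsets[v + 1]; i++) {
            sum += neighbors[i];
        }
        return sum;
    }

    /** Copies the neighbor slice out, for display only. */
    int[] neighborsOf(int v) {
        return Arrays.copyOfRange(neighbors, offsets[v], offsets[v + 1]);
    }

    public static void main(String[] args) {
        // 4 vertices; edges 0->1, 0->2, 1->2, 2->0, 2->3
        CsrSketch g = build(4, new int[] {0, 0, 1, 2, 2}, new int[] {1, 2, 2, 0, 3});
        System.out.println(Arrays.toString(g.offsets));         // [0, 2, 3, 5, 5]
        System.out.println(Arrays.toString(g.neighborsOf(2)));  // [0, 3]
        System.out.println(g.outDegree(3));                     // 0
        System.out.println(g.sumOfNeighborIds(0));              // 3
    }
}
```

Because `offsets` is a prefix sum of degrees, the builder needs only two passes over the edge list, and the inner loop of `sumOfNeighborIds` reads one contiguous `int[]` range, which is the sequential access pattern the paragraph above credits for the cache-friendliness.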

Columnar Property Storage

Properties are stored as typed flat arrays: int[], long[], double[], or dictionary-encoded int[] for strings. Each column has a compact null bitmap that uses just 1 bit per vertex. Dictionary encoding stores each distinct string only once and replaces every value with a small integer code, so low-cardinality fields like status, category, or tag cost little more than one int per vertex.
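A minimal Java sketch of one such column: a dictionary-encoded string property with a 1-bit-per-vertex null bitmap (here `java.util.BitSet`). The class `DictStringColumn` and its methods are hypothetical; the sketch assumes the column is built once from values indexed by dense vertex ID and shows the idea, not ArcadeDB's storage format.

```java
import java.util.ArrayList;
import java.util.BitSet;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical dictionary-encoded string column with a null bitmap; illustrates the technique only. */
final class DictStringColumn {
    private final int[] codes;             // one dictionary code per vertex
    private final List<String> dictionary; // code -> distinct string value
    private final BitSet nulls;            // bit v set => vertex v has no value

    DictStringColumn(String[] values) {
        Map<String, Integer> lookup = new HashMap<>();
        dictionary = new ArrayList<>();
        codes = new int[values.length];
        nulls = new BitSet(values.length);
        for (int v = 0; v < values.length; v++) {
            String s = values[v];
            if (s == null) {
                nulls.set(v);              // 1 bit records the null; the code slot stays 0
                continue;
            }
            Integer code = lookup.get(s);
            if (code == null) {            // first occurrence: append to the dictionary
                code = dictionary.size();
                dictionary.add(s);
                lookup.put(s, code);
            }
            codes[v] = code;
        }
    }

    String get(int v) {
        return nulls.get(v) ? null : dictionary.get(codes[v]);
    }

    int distinctValues() {
        return dictionary.size();
    }

    /** Equality filter: one dictionary lookup, then a scan comparing int codes only. */
    int countEquals(String value) {
        int target = dictionary.indexOf(value);
        if (target < 0) return 0;
        int count = 0;
        for (int v = 0; v < codes.length; v++) {
            if (codes[v] == target && !nulls.get(v)) count++;
        }
        return count;
    }

    public static void main(String[] args) {
        DictStringColumn status = new DictStringColumn(
                new String[] {"active", "inactive", "active", null, "active"});
        System.out.println(status.distinctValues());      // 2
        System.out.println(status.countEquals("active")); // 3
        System.out.println(status.get(3));                // null
    }
}
```

Since the scan in `countEquals` compares `int` codes, a filter over a status column never touches a `String` after the single dictionary lookup.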

Three Synchronization Modes

The key design decision was making the OLAP engine coexist with OLTP, not replace it. You choose how writes propagate:

| Mode | Behavior | Staleness | Best For |
|---|---|---|---|
| SYNCHRONOUS | Applies overlay on each commit | Zero: writes reflected immediately | Real-time analytics |
| ASYNCHRONOUS | Background rebuild on commit | Brief window during rebuild | Large graphs, eventual consistency |
| OFF | Manual rebuild only | Until you rebuild | Batch analytics, static snapshots |

In SYNCHRONOUS mode, the engine captures transaction deltas and merges them into an immutable overlay on top of the base CSR. Readers always see a consistent snapshot via a volatile reference swap. When the overlay grows past a configurable threshold (default: 10,000 edges), a background compaction rebuilds the full CSR transparently, with no downtime.
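The overlay-and-swap pattern can be sketched in Java with an immutable snapshot object published through a `volatile` field. Everything here is hypothetical and simplified: `OverlayedTopology` keeps adjacency in maps instead of CSR arrays, handles edge additions only, and compacts inline on the committing thread rather than in the background.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical sketch of an immutable base + delta overlay published via a volatile swap. */
final class OverlayedTopology {
    /** Immutable snapshot: compacted base adjacency plus edges committed since the last compaction. */
    record Snapshot(Map<Integer, List<Integer>> base,
                    Map<Integer, List<Integer>> overlay,
                    int overlayEdges) {}

    private final int compactionThreshold;
    private volatile Snapshot current = new Snapshot(Map.of(), Map.of(), 0);

    OverlayedTopology(int compactionThreshold) {
        this.compactionThreshold = compactionThreshold;
    }

    /** Reader path: a single volatile read yields a consistent view without locking. */
    Snapshot snapshot() {
        return current;
    }

    /** Neighbors of v as seen by one snapshot: base edges followed by overlay edges. */
    static List<Integer> neighbors(Snapshot s, int v) {
        List<Integer> out = new ArrayList<>(s.base().getOrDefault(v, List.of()));
        out.addAll(s.overlay().getOrDefault(v, List.of()));
        return out;
    }

    /** Writer path, called once per commit: build a new overlay, then publish it atomically. */
    synchronized void applyCommit(List<int[]> addedEdges) {
        Snapshot old = current;
        Map<Integer, List<Integer>> overlay = mutableCopy(old.overlay());
        for (int[] e : addedEdges) {
            overlay.computeIfAbsent(e[0], k -> new ArrayList<>()).add(e[1]);
        }
        int pending = old.overlayEdges() + addedEdges.size();
        if (pending > compactionThreshold) {
            // Compaction: fold the overlay into a new base (a real engine would do this in the background)
            Map<Integer, List<Integer>> base = mutableCopy(old.base());
            overlay.forEach((v, ns) -> base.computeIfAbsent(v, k -> new ArrayList<>()).addAll(ns));
            current = new Snapshot(freeze(base), Map.of(), 0);
        } else {
            current = new Snapshot(old.base(), freeze(overlay), pending);
        }
    }

    private static Map<Integer, List<Integer>> mutableCopy(Map<Integer, List<Integer>> m) {
        Map<Integer, List<Integer>> copy = new HashMap<>();
        m.forEach((k, v) -> copy.put(k, new ArrayList<>(v)));
        return copy;
    }

    private static Map<Integer, List<Integer>> freeze(Map<Integer, List<Integer>> m) {
        Map<Integer, List<Integer>> frozen = new HashMap<>();
        m.forEach((k, v) -> frozen.put(k, List.copyOf(v)));
        return Collections.unmodifiableMap(frozen);
    }

    public static void main(String[] args) {
        OverlayedTopology topo = new OverlayedTopology(2);             // tiny threshold for the demo
        topo.applyCommit(List.of(new int[] {0, 1}));
        Snapshot before = topo.snapshot();                              // a reader holds this view
        topo.applyCommit(List.of(new int[] {0, 2}, new int[] {1, 2}));  // 3 pending > 2: compacts
        System.out.println(neighbors(before, 0));           // [1]  (old snapshot is unaffected)
        System.out.println(neighbors(topo.snapshot(), 0));  // [1, 2]
        System.out.println(topo.snapshot().overlayEdges()); // 0
    }
}
```

Because every published `Snapshot` is immutable, a reader that grabbed `before` keeps seeing exactly that state while the writer swaps in newer ones; only writers synchronize.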

The Benchmarks

Internal Benchmark: OLTP vs OLAP

Graph: 500K vertices, ~8M edges

| Benchmark | ArcadeDB OLTP | ArcadeDB OLAP | Speedup |
|---|---|---|---|
| 1-hop count | 3.0 µs | 0.8 µs | 3.8x |
| 1-hop IDs | 5.0 µs | 1.0 µs | 5.1x |
| 2-hop | 31.3 µs | 3.3 µs | 9.4x |
| 3-hop | 418.3 µs | 35.1 µs | 11.9x |
| 4-hop | 6,089 µs | 390.1 µs | 15.6x |
| 5-hop | 92,497 µs | 2,765 µs | 33.5x |
| Shortest Path | 165 ms/pair | 3.1 ms/pair | 54.0x |
| PageRank (20 iter) | 54,094 ms | 117 ms | 462.3x |
| Connected Components | 2,238 ms | 60 ms | 37.3x |
| Label Propagation | 33,450 ms | 142 ms | 235.6x |

Benchmarked on a MacBook Pro M5 Pro (2026), 48 GB RAM, 1 TB disk.

The OLAP engine dominates across the board, especially on full-graph algorithms. PageRank goes from nearly a minute to 117 milliseconds — a 462.3x speedup. Connected Components is 37.3x faster, and Label Propagation 235.6x faster. The deeper the traversal, the bigger the advantage.

LDBC Graphalytics: ArcadeDB vs the Competition

We ran the standard LDBC Graphalytics benchmark framework against other graph databases. Results include both embedded mode (in-process Java) and Docker container (same conditions as Neo4j, Memgraph, FalkorDB, and HugeGraph). The results speak for themselves:

| Algorithm | ArcadeDB Embedded | ArcadeDB Docker | Neo4j 2026 | Kuzu | DuckPGQ | ArangoDB | FalkorDB | HugeGraph | Winner |
|---|---|---|---|---|---|---|---|---|---|
| Load | 55.34s | 35.30s | 653.41s | 3.56s | 0.35s | 352.47s | 1568.36s | 15.27s | DuckPGQ |
| PageRank | 0.10s | 0.40s | 3.50s | 0.97s | 0.83s | 47.58s | 0.70s | 1.23s | ArcadeDB |
| WCC | 0.08s | 0.17s | 0.32s | 0.10s | 1.95s | 25.83s | 0.58s | 2.00s | ArcadeDB |
| BFS | 0.09s | 0.30s | 0.58s | 0.29s | N/A | N/A | 0.05s | 0.17s | FalkorDB |
| LCC | 2.35s | 2.51s | 15.47s | N/A | 10.79s | N/A | N/A | 122.44s | ArcadeDB |
| SSSP | 0.92s | 0.41s | N/A | N/A | N/A | 33.36s | N/A | N/A | ArcadeDB |
| CDLP | 1.11s | 1.35s | N/A | N/A | N/A | 126.63s | 1.58s | 23.88s | ArcadeDB |

ArcadeDB leads on 5 of the 6 algorithms (data loading, where DuckPGQ is fastest, is not counted). The only exception is BFS, where FalkorDB edges ahead (0.05s vs 0.09s). Even as a Docker container, with network serialization overhead, the HTTP API, and Docker Desktop’s VM layer in the path, ArcadeDB is faster than every other Docker-based system on every algorithm except BFS. The Docker results are measured warm (JIT-compiled), matching how production servers run. Results are fully reproducible; see the benchmark project on GitHub.

Memgraph crashed on connected components. Investigating further, we found 50 open issues on their GitHub reporting random crashes triggered even by simple queries, some of which have remained unaddressed for over 3 years.

LSQB: Subgraph Pattern Matching

We also ran the LSQB (Labelled Subgraph Query Benchmark), a microbenchmark from LDBC that focuses on subgraph pattern matching: counting how many times a given labelled graph pattern appears in a dataset. It tests multi-way joins, anti-patterns (NOT EXISTS), and complex multi-hop chains using 9 Cypher queries on the LDBC SNB social network (SF1: ~3.9M vertices, ~17.9M edges).

We compared embedded ArcadeDB against six other systems (the graph databases Kuzu, Neo4j, Memgraph, and Dgraph, and the relational engines DuckDB and PostgreSQL) as well as against ArcadeDB itself running as a Docker container. All Docker-based systems run under the same conditions (Docker Desktop for macOS):

| Query | Pattern | Expected Count | ArcadeDB Embedded | ArcadeDB Docker | DuckDB | Kuzu | Neo4j | PostgreSQL | Memgraph | Dgraph | Winner |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Q1 | 8-hop chain | 221,636,419 | 0.23s | 0.25s | 0.15s | 5.83s | 8.25s | 6.56s | 60.45s | 2.52s | DuckDB |
| Q2 | Diamond | 1,085,627 | 0.18s | 0.19s | 0.02s | 0.14s | 2.06s | 0.34s | timeout | N/A | DuckDB |
| Q3 | Triangle | 753,570 | 0.10s | 0.13s | 0.05s | 2.44s | 14.31s | 2.12s | timeout | N/A | DuckDB |
| Q4 | Star join | 14,836,038 | 0.03s | 0.03s | 0.08s | N/A | 7.82s | 6.86s | 4.50s | 8.13s | ArcadeDB |
| Q5 | Fork | 13,824,510 | 0.29s | 0.23s | 0.04s | N/A | 6.72s | 0.69s | 3.86s | N/A | DuckDB |
| Q6 | 2-hop traversal | 1,668,134,320 | 0.11s | 0.11s | 2.18s | 1.41s | 52.06s | 17.72s | 148.14s | N/A | ArcadeDB |
| Q7 | Star (optional) | 26,190,133 | 0.09s | 0.02s | 0.08s | N/A | 10.45s | 11.22s | 5.59s | 5.97s | ArcadeDB |
| Q8 | Anti-pattern | 6,907,213 | 0.19s | 0.19s | 0.07s | N/A | 12.91s | 1.31s | 3.37s | N/A | DuckDB |
| Q9 | Anti-pattern + traversal | 1,596,153,418 | 1.18s | 1.06s | 7.77s | 6.15s | 59.09s | 22.25s | timeout | N/A | ArcadeDB |

All 9 queries produce correct results matching the official LSQB expected output. Kuzu skips Q4/Q5/Q7/Q8 (no :Message supertype support). Memgraph times out on Q2/Q3/Q9 (600s limit).

ArcadeDB is the fastest system on 4 of the 9 queries (Q4, Q6, Q7, Q9). Q4 and Q7 are star-shaped joins centered on a Message node. With the GAV’s CSR acceleration, ArcadeDB completes these in 20–30 ms (best of embedded and Docker), about 2.7–4x faster than DuckDB and 261–523x faster than Neo4j. Q6 and Q9 are multi-hop path traversals where ArcadeDB is 7–20x faster than DuckDB, 55–473x faster than Neo4j, and 21–161x faster than PostgreSQL. Q6 in particular showcases the edge-scan algebraic optimization: ArcadeDB computes a count of 1.67 billion matches in just 110 ms, 20x faster than DuckDB.
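The post does not describe how the edge-scan algebraic optimization works internally. As a hedged illustration of the general idea only, assuming nothing about ArcadeDB’s actual implementation, the number of directed two-hop paths u→v→w follows directly from CSR degrees: each edge into v pairs with each edge out of v, so the count is Σ in(v)·out(v), or equivalently, scanning every edge u→v once and adding out(v). The class and method names are hypothetical.

```java
/** Hypothetical illustration of counting 2-hop paths without enumerating them. */
final class TwoHopCount {
    /** Sum over vertices of in(v) * out(v): every edge into v pairs with every edge out of v. */
    static long byVertexDegrees(int[] outOffsets, int[] inOffsets) {
        long total = 0;
        for (int v = 0; v + 1 < outOffsets.length; v++) {
            long inDeg = inOffsets[v + 1] - inOffsets[v];
            long outDeg = outOffsets[v + 1] - outOffsets[v];
            total += inDeg * outDeg;
        }
        return total;
    }

    /** Edge-scan form: for each edge u -> v, add out(v), the number of ways to extend it by one hop. */
    static long byEdgeScan(int[] outOffsets, int[] outNeighbors) {
        long total = 0;
        for (int v : outNeighbors) {
            total += outOffsets[v + 1] - outOffsets[v];
        }
        return total;
    }

    public static void main(String[] args) {
        // Edges 0->1, 0->2, 1->2, 2->0, 2->3 as forward offsets/neighbors plus backward offsets
        int[] outOffsets = {0, 2, 3, 5, 5};
        int[] outNeighbors = {1, 2, 2, 0, 3};
        int[] inOffsets = {0, 1, 2, 4, 5}; // in-degrees 1, 1, 2, 1
        System.out.println(byVertexDegrees(outOffsets, inOffsets)); // 7
        System.out.println(byEdgeScan(outOffsets, outNeighbors));   // 7
    }
}
```

Both forms touch each vertex or edge once, so the work does not grow with the number of matches, even when that number is in the billions. A real query such as Q6 adds label and inequality filters that this sketch ignores (its 7 paths include 0→2→0 and 2→0→2).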

Where DuckDB wins: the remaining queries (Q1, Q2, Q3, Q5, Q8) are join-intensive patterns (long chains, diamonds, forks, and anti-patterns) where DuckDB’s columnar vectorized execution excels. The gap has narrowed significantly, though: on Q8, ArcadeDB is now only 2.7x slower than DuckDB (down from 7.7x), thanks to the edge-scan anti-join optimization.
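Anti-patterns such as NOT EXISTS reduce to a membership test once neighbor lists are available. This hedged Java sketch assumes each vertex’s CSR slice is sorted (an assumption; the post does not say so) and uses `Arrays.binarySearch` on that slice to count paths a→b→c that have no direct edge a→c. It is a generic anti-join technique, not necessarily what ArcadeDB’s edge-scan anti-join does, and the names are hypothetical.

```java
import java.util.Arrays;

/** Hypothetical anti-join over a CSR whose per-vertex neighbor slices are sorted. */
final class CsrAntiJoin {
    /** EXISTS u -> w: binary search inside u's sorted slice [offsets[u], offsets[u + 1]). */
    static boolean hasEdge(int[] offsets, int[] neighbors, int u, int w) {
        return Arrays.binarySearch(neighbors, offsets[u], offsets[u + 1], w) >= 0;
    }

    /** Counts paths a -> b -> c with c != a and NOT EXISTS a -> c. */
    static long countOpenTwoHops(int[] offsets, int[] neighbors) {
        long count = 0;
        for (int a = 0; a + 1 < offsets.length; a++) {
            for (int i = offsets[a]; i < offsets[a + 1]; i++) {
                int b = neighbors[i];
                for (int j = offsets[b]; j < offsets[b + 1]; j++) {
                    int c = neighbors[j];
                    if (c != a && !hasEdge(offsets, neighbors, a, c)) {
                        count++; // anti-join: keep the path only when the direct edge is absent
                    }
                }
            }
        }
        return count;
    }

    public static void main(String[] args) {
        // Edges 0->1, 0->2, 1->2, 2->0, 2->3; each slice is already sorted
        int[] offsets = {0, 2, 3, 5, 5};
        int[] neighbors = {1, 2, 2, 0, 3};
        System.out.println(countOpenTwoHops(offsets, neighbors)); // 4
    }
}
```

Each NOT EXISTS probe costs O(log d) for a slice of degree d and allocates nothing, which is why keeping slices sorted is a common choice for this kind of check.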

ArcadeDB Docker vs other Docker systems (apples-to-apples): Even with HTTP, network, and Docker VM overhead, ArcadeDB Docker is roughly 11–523x faster than Neo4j, 2–561x faster than PostgreSQL, and 17–1,347x faster than Memgraph on the queries Memgraph completes. The Docker overhead is negligible: Docker timings stay within 0.12s of the embedded ones (and are slightly faster on Q5, Q7, and Q9), because the GAV/CSR does the heavy lifting, not the transport.

The other graph databases: Neo4j is roughly 11–523x slower than ArcadeDB, depending on the query. Memgraph times out on 3 queries and is 17–1,347x slower on the ones it completes. Kuzu can’t run 4 of the 9 queries because it lacks type hierarchy support, and is 6–25x slower than ArcadeDB on the rest except Q2, where Kuzu edges ahead (0.14s vs 0.18s). PostgreSQL, a relational engine, beats Neo4j on 8 of the 9 queries but is still 2–561x slower than ArcadeDB.

Dgraph v25.3.0 has no native pattern matching language (DQL is a hierarchical traversal language, not Cypher/SQL). Through creative use of DQL value-variable propagation and math(), we managed to express 3 of the 9 queries, but Dgraph is 11x slower than ArcadeDB on Q1 (2.52s vs 0.23s), 271x slower on Q4 (8.13s vs 0.03s), and about 300x slower on Q7 (5.97s vs 0.02s). The remaining 6 queries are fundamentally impossible in DQL (no JOINs, no self-joins, no anti-joins).

SurrealDB v2.6.4 fares even worse: we wrote queries for 5 of the 9 patterns using nested subqueries with $parent dereferencing, but every single one timed out at 120 seconds. Its O(n*m) nested-loop execution without index acceleration is simply too slow for 3.9M vertices. The remaining 4 queries cannot be expressed in SurrealQL at all (no table aliases for self-joins).

The takeaway: both embedded and as a Docker container, ArcadeDB’s CSR engine beats every other graph database on every query except Q2, where Kuzu is marginally faster, and it beats DuckDB on the graph-shaped queries. Databases that claim “graph capabilities” (Dgraph, SurrealDB) can barely express these patterns, let alone execute them competitively. And unlike DuckDB, an embedded engine designed for analytics, ArcadeDB pairs this speed with an OLTP engine built for concurrent transactional workloads and a full graph query language on top.

Memory: Compact by Design

You might expect an OLAP layer to be a memory hog. The opposite is true. For the 500K vertex / 8M edge benchmark graph:

- GAV (OLAP): 138.4 MB

- OLTP estimate: ~1.2 GB

- Compression ratio: 9.0x more compact

The CSR encoding uses ~8 bytes per edge, node ID mapping takes ~8 bytes per vertex, and columnar properties use 4–8 bytes per vertex per column. String properties are dictionary-encoded, and null bitmaps cost just 1 bit per vertex per column.
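The per-element costs above lend themselves to a back-of-the-envelope check. This Java sketch applies them to the 500K-vertex / 8M-edge benchmark graph, assuming three 8-byte property columns (the upper end of the stated range). It only adds up the components itemized above, so it yields a rough floor of about 80 MB; the gap to the measured 138.4 MB presumably comes from structures not broken out here. The method name is hypothetical.

```java
import java.util.Locale;

/** Hypothetical back-of-the-envelope estimate using the per-element costs quoted above. */
final class GavMemoryEstimate {
    static long estimateBytes(long vertices, long edges, int propertyColumns, int bytesPerValue) {
        long topology = edges * 8;                                    // ~8 bytes per edge (CSR)
        long idMapping = vertices * 8;                                // ~8 bytes per vertex (node ID mapping)
        long properties = vertices * propertyColumns * bytesPerValue; // one fixed-width value per vertex per column
        long nullBitmaps = propertyColumns * ((vertices + 7) / 8);    // 1 bit per vertex per column, rounded up to bytes
        return topology + idMapping + properties + nullBitmaps;
    }

    public static void main(String[] args) {
        // 500K vertices, 8M edges, three 8-byte property columns (assumed)
        long bytes = estimateBytes(500_000, 8_000_000, 3, 8);
        System.out.printf(Locale.ROOT, "%.1f MB%n", bytes / 1e6); // 80.2 MB
    }
}
```

Edges dominate the estimate (64 MB of the ~80 MB), so topology size, not property count, is what mainly drives the footprint of a view.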

In practice, enabling Graph OLAP adds a fraction of the memory your OLTP data already uses.

70 Built-in Graph Algorithms — All OLAP-Optimized

All 70 graph algorithms ship fully optimized for the Graph OLAP Engine, operating directly on CSR arrays with zero GC pressure and multi-threaded execution. When a GAV is available, every algorithm automatically uses the OLAP path — no configuration needed.

| Category | Algorithms |
|---|---|
| Path Finding | Shortest Path, A*, Bellman-Ford, Dijkstra, Dijkstra Single Source, Duan SSSP, All Pairs Shortest Path (APSP), All Simple Paths, K Shortest Paths, Longest Path DAG, Steiner Tree |
| Traversal | BFS, DFS |
| Centrality | Degree, Closeness, Betweenness, Eigenvector, Harmonic, Eccentricity, HITS, Katz, ArticleRank |
| Ranking | PageRank, Personalized PageRank, VoteRank |
| Community Detection | Label Propagation, Louvain, Leiden, Strongly Connected Components (SCC), Weakly Connected Components (WCC), Biconnected Components, SLPA |
| Link Prediction | Adamic-Adar, Common Neighbors, Jaccard Similarity, Preferential Attachment, Resource Allocation, SimRank |
| Clustering & Partitioning | Local Clustering Coefficient, Triangle Count, Hierarchical Clustering, K-Means, Graph Coloring, Bipartite Check, Bipartite Matching |
| Subgraph Analysis | Clique, K-Core, K-Truss, Densest Subgraph, Articulation Points, Bridges |
| Spanning Trees | Minimum Spanning Tree (MST), Min Spanning Arborescence |
| Network Flow | Max Flow, Max K-Cut |
| Graph Metrics | Assortativity, Conductance, Modularity Score, Rich Club, Graph Summary, Total Neighbors, Same Community |
| ML & Embeddings | Random Walk, Node2Vec, FastRP, GraphSAGE, HashGNN, Influence Maximization |
| Other | Cycle Detection, Topological Sort |
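To show what running directly on CSR arrays means in practice, here is a minimal, single-threaded PageRank in Java. It is a textbook power iteration (damping factor, uniform redistribution of rank from dangling vertices) that pulls rank along a backward (IN) CSR and swaps two preallocated `double[]` buffers between iterations. The names are hypothetical, and ArcadeDB’s multi-threaded implementation likely differs in its details.

```java
import java.util.Arrays;

/** Hypothetical textbook PageRank over CSR arrays; not ArcadeDB's implementation. */
final class CsrPageRank {
    /**
     * @param inOffsets   backward CSR offsets (length n + 1)
     * @param inNeighbors sources of each vertex's incoming edges
     * @param outDegree   out-degree per vertex (from the forward CSR offsets)
     */
    static double[] pageRank(int[] inOffsets, int[] inNeighbors, int[] outDegree,
                             int iterations, double damping) {
        int n = outDegree.length;
        double[] rank = new double[n];
        double[] next = new double[n];
        Arrays.fill(rank, 1.0 / n);
        for (int it = 0; it < iterations; it++) {
            double dangling = 0;                           // rank held by vertices with no out-edges
            for (int v = 0; v < n; v++) {
                if (outDegree[v] == 0) dangling += rank[v];
            }
            double base = (1 - damping) / n + damping * dangling / n;
            for (int v = 0; v < n; v++) {
                double sum = 0;
                for (int i = inOffsets[v]; i < inOffsets[v + 1]; i++) {
                    int u = inNeighbors[i];
                    sum += rank[u] / outDegree[u];         // u splits its rank across its out-edges
                }
                next[v] = base + damping * sum;
            }
            double[] tmp = rank;                           // swap buffers: no per-iteration allocation
            rank = next;
            next = tmp;
        }
        return rank;
    }

    public static void main(String[] args) {
        // Edges 0->1, 0->2, 1->2, 2->0, 2->3, stored as a backward (IN) CSR
        int[] inOffsets = {0, 1, 2, 4, 5};
        int[] inNeighbors = {2, 0, 0, 1, 2};
        int[] outDegree = {2, 1, 2, 0}; // vertex 3 is dangling
        double[] ranks = pageRank(inOffsets, inNeighbors, outDegree, 20, 0.85);
        System.out.println(Arrays.toString(ranks));
    }
}
```

Pulling along in-edges means each vertex writes only its own `next[v]` slot, which is also what makes the per-vertex loop straightforward to split across threads.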

Getting Started

SQL

-- Create a view over your social graph
CREATE GRAPH ANALYTICAL VIEW social
VERTEX TYPES (Person, Company)
EDGE TYPES (FOLLOWS, WORKS_AT)
PROPERTIES (name, age, status)
UPDATE MODE SYNCHRONOUS

-- That's it. Your Cypher queries are now accelerated automatically.

-- You can also use edge properties for weighted algorithms:
CREATE GRAPH ANALYTICAL VIEW weighted
VERTEX TYPES (City)
EDGE TYPES (ROAD)
EDGE PROPERTIES (distance, toll)
UPDATE MODE SYNCHRONOUS

Java API

GraphAnalyticalView gav = GraphAnalyticalView.builder(database)
    .withName("social")
    .withVertexTypes("Person", "Company")
    .withEdgeTypes("FOLLOWS", "WORKS_AT")
    .withProperties("name", "age", "status")
    .withUpdateMode(UpdateMode.SYNCHRONOUS)
    .build();

// Run algorithms directly on the OLAP engine
GraphAlgorithms algos = new GraphAlgorithms();
double[] ranks = algos.pageRank(gav, 20, 0.85);
int[] components = algos.connectedComponents(gav);

Managing Views

-- Change update mode on the fly
ALTER GRAPH ANALYTICAL VIEW social UPDATE MODE ASYNCHRONOUS

-- Force a rebuild
REBUILD GRAPH ANALYTICAL VIEW social

-- Drop when no longer needed
DROP GRAPH ANALYTICAL VIEW social

Named views are persisted in the schema and automatically restored on database restart.

The Zero-Compromise Philosophy

Most databases force you to choose: fast transactions or fast analytics. Export your data to a separate system, maintain two clusters, deal with synchronization lag.

ArcadeDB’s Graph OLAP Engine rejects that tradeoff entirely:

- Your OLTP engine stays exactly as it is — same speed, same ACID guarantees, same API

- Turn on a GAV, and analytical queries get up to 462x faster automatically

- Synchronization is configurable — real-time, async, or manual, your choice

- Memory overhead is minimal — the OLAP representation is 9.0x more compact than OLTP

- No query changes — the optimizer handles everything transparently

We were already the fastest OLTP graph database. Now we’re the fastest OLAP graph database too.

The Graph OLAP Engine is available from ArcadeDB v26.3.2. For detailed documentation, visit docs.arcadedb.com.

Try ArcadeDB: GitHub | Docker Hub | Documentation